In the world of artificial intelligence, processing sequential data such as time series, speech signals, and natural language text has long been a challenging task. While feedforward neural networks have proven to be good classifiers for problems with fixed-size inputs and outputs, they are not flexible enough to handle variable-length sequences. To address this challenge, researchers and practitioners developed a family of Recurrent Neural Networks (RNNs) that capture the complex temporal dependencies in such data.

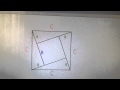

One of the earliest and most important RNNs is the Elman Network, introduced by Jeffrey Elman in 1990. This simple feedback loop architecture maintains a hidden state to process sequential data and introduces a ‘context layer’ that stores a copy of the hidden state at each time step and feeds it back into the network at the next step. This context layer serves as a ‘memory’ of the network's previous inputs, allowing it to capture medium-term dependencies in the sequence.
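
To make the role of the context layer concrete, here is a minimal NumPy sketch of an Elman-style forward pass. The function name `elman_forward`, the weight names (`W_xh`, `W_hh`, `W_hy`), the `tanh` activation, and the layer sizes are all illustrative assumptions, not details taken from the video.

```python
import numpy as np

def elman_forward(inputs, W_xh, W_hh, b_h, W_hy, b_y):
    """Run a simple Elman-style network over one sequence.

    inputs: array of shape (T, input_size), where T may vary per sequence.
    Returns (outputs, hidden_states) with shapes
    (T, output_size) and (T, hidden_size).
    """
    h = np.zeros(W_hh.shape[0])  # the context starts out empty
    outputs, hidden_states = [], []
    for x_t in inputs:
        # The new hidden state mixes the current input with the
        # previous hidden state held in the context layer.
        h = np.tanh(W_xh @ x_t + W_hh @ h + b_h)
        outputs.append(W_hy @ h + b_y)
        hidden_states.append(h)
    return np.array(outputs), np.array(hidden_states)

# Hypothetical sizes and random weights, chosen only for illustration.
rng = np.random.default_rng(0)
input_size, hidden_size, output_size, T = 3, 5, 2, 7
W_xh = rng.normal(scale=0.5, size=(hidden_size, input_size))
W_hh = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
b_h = np.zeros(hidden_size)
W_hy = rng.normal(scale=0.5, size=(output_size, hidden_size))
b_y = np.zeros(output_size)

sequence = rng.normal(size=(T, input_size))
outputs, hidden_states = elman_forward(sequence, W_xh, W_hh, b_h, W_hy, b_y)
print(outputs.shape, hidden_states.shape)
```

Because the same weights are reused at every step, the same function handles a sequence of any length, which is the flexibility a fixed-input feedforward network lacks.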

The Elman Network has been successfully applied in a range of real-world applications, including finance, weather forecasting, and predictive text language models. For example, it has been used to automate market making spreads, determine portfolio manager asset allocation, and predict the next word or token in a sequence.

However, Elman Networks are not without their challenges. One of the most significant is the vanishing and exploding gradients problem, which occurs during backpropagation when gradients are propagated through time from the output back to the first time step. Because the same recurrent weight matrix is applied at every time step, the gradient is multiplied by that matrix once per step. If the matrix's eigenvalues have magnitudes less than one, the gradients shrink toward zero (vanishing); if they are greater than one, the gradients can grow very large (exploding). Either way, it becomes difficult to learn long-term dependencies.
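
The effect of that repeated multiplication can be illustrated numerically. The sketch below is a simplification that ignores the activation function's derivative (which, for `tanh`, would only shrink the gradient further) and uses a scaled orthogonal matrix so that every eigenvalue has the same magnitude. The helper names `scaled_orthogonal` and `gradient_norms` are hypothetical.

```python
import numpy as np

def scaled_orthogonal(size, scale, seed=0):
    """Build a matrix whose eigenvalues all have magnitude `scale`."""
    rng = np.random.default_rng(seed)
    q, _ = np.linalg.qr(rng.normal(size=(size, size)))
    return scale * q

def gradient_norms(W_hh, steps, seed=0):
    """Track the norm of a gradient pushed back through `steps` time steps."""
    rng = np.random.default_rng(seed)
    grad = rng.normal(size=W_hh.shape[0])
    grad /= np.linalg.norm(grad)  # start from a unit-length gradient
    norms = []
    for _ in range(steps):
        # One step of backpropagation through time in a linear recurrence.
        grad = W_hh.T @ grad
        norms.append(np.linalg.norm(grad))
    return norms

vanishing = gradient_norms(scaled_orthogonal(8, 0.9), steps=50)
exploding = gradient_norms(scaled_orthogonal(8, 1.1), steps=50)
print(f"scale 0.9 after 50 steps: {vanishing[-1]:.6f}")
print(f"scale 1.1 after 50 steps: {exploding[-1]:.2f}")
```

With these assumed settings the gradient norm after 50 steps is 0.9^50 (about 0.005) in the first case and 1.1^50 (about 117) in the second, even though the two matrices differ only slightly in scale.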

A related challenge is the difficulty of capturing long-term dependencies. While the context layer in Elman Networks helps capture medium-term dependencies, the values it holds eventually fade, making it difficult to maintain a memory of previous states over very long sequences.

These challenges led to the development of more modern architectures, such as the Long Short-Term Memory (LSTM) network. LSTM networks largely mitigate the vanishing gradient problem by using a gating mechanism to selectively forget or remember information. They also maintain a separate cell state, a more sophisticated memory mechanism that allows them to capture long-term dependencies.
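
As a rough illustration of the gating idea, here is a sketch of a single step of a standard LSTM cell in NumPy. The function name `lstm_step`, the stacked weight layout (forget, input, output, candidate), and the sizes are assumptions made for this example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM time step.

    W: (4H, input_size), U: (4H, H), b: (4H,), stacked in the assumed
    order forget gate, input gate, output gate, candidate values.
    """
    H = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    f = sigmoid(z[0:H])          # forget gate: how much old memory to keep
    i = sigmoid(z[H:2 * H])      # input gate: how much new information to write
    o = sigmoid(z[2 * H:3 * H])  # output gate: how much memory to expose
    g = np.tanh(z[3 * H:4 * H])  # candidate values to write
    c = f * c_prev + i * g       # cell state is updated additively
    h = o * np.tanh(c)           # hidden state passed to the next step
    return h, c

# Hypothetical sizes and random weights, chosen only for illustration.
rng = np.random.default_rng(0)
input_size, H = 3, 4
W = rng.normal(scale=0.5, size=(4 * H, input_size))
U = rng.normal(scale=0.5, size=(4 * H, H))
b = np.zeros(4 * H)

h, c = np.zeros(H), np.zeros(H)
for x_t in rng.normal(size=(6, input_size)):
    h, c = lstm_step(x_t, h, c, W, U, b)
print(h.shape, c.shape)
```

The key line is the additive update of `c`: when the forget gate is close to one and the input gate is close to zero, the cell state passes through a step nearly unchanged, which is what lets information survive over many time steps.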

In this video, we explored the evolution of neural networks with a focus on the Elman Network. We saw how it used a feedback loop architecture and a context layer to maintain a hidden state for capturing medium-term dependencies in the sequence. We discussed some of the real-world applications of Elman Networks, including finance, weather forecasting, and predictive text language models. We also identified some of the challenges associated with Elman Networks, such as the vanishing and exploding gradients problem and difficulty capturing long-term dependencies.

We also mentioned that the next video in this series will focus on the LSTM network, a more modern architecture that overcomes the challenges associated with Elman Networks. Finally, we provided links to resources to get viewers up to speed on AI and neural networks.

Links to playlists:

Neural Networks in 60 seconds: https://youtube.com/playlist?list=PLaJCKi8Nk1hxNSMM8FSWCWsScstfutKGn

Neural Network Primer: https://youtube.com/playlist?list=PLaJCKi8Nk1hzqalT_PL35I9oUTotJGq7a